Agouti

What is Agouti?

Agouti is a web platform designed to support the specific local projects within each pilot country where the aim is to use the recorded mammal sightings to estimate the number and distribution of different species.

This is generally done by professionals, advanced naturalists, and trained volunteers who have access to a number of cameras. If the cameras are set up according to ENETWILD protocols, the data can be used to systematically estimate mammal densities.

Author: P Palencia/SaBio-IREC

Why should I use it?

Help us use your camera trap images to answer important questions about mammal populations.

Agouti uses a clean and modern interface, which is geared towards productivity. Anyone can use it with little training. Videos are available to demonstrate the different aspects of the system.

The app lets all users work collaboratively to manage the incoming data efficiently.

The data is organized using internationally standardized formats and can be added to open archives or imported into other software for later analysis.

You can use the app to train your own team of collaborators or to work with other researchers.

What are the benefits of its use?

Our knowledge of most mammals is still quite limited. Although there are very detailed studies on mammal biology, the information tends to concentrate on very small natural areas. It’s important to widen this very local point of view, so that we can gain more information about specific mammals in their broader environment.

Thanks to the information submitted by citizen scientists like you, we can better understand the distribution and temporal patterns of a wide variety of mammals.

This information will help us better understand the influence of climate change and human activities on changes in distribution patterns and the presence of introduced or invasive species. Wild mammals also affect the transmission of diseases affecting livestock and humans. A good understanding of mammal distribution can help to make evidence-based decisions with the aim of reducing risk.

When we collect enough data points through information uploaded by the users, not only can we see which species are present, but we can also have a better sense of which species may be missing in each region. This information is very important in order to understand and accurately predict species distribution and will be used to help create the next European Mammal Atlas.

We will be able to verify the information you send us with the help of experts and other members of the scientific community. This validated information will be sent to the Global Biodiversity Information Facility (GBIF) and the European Food Safety Authority (EFSA), where researchers and wildlife managers around the world can use it to answer present and future research and conservation questions.

Why at GBIF?

GBIF—the Global Biodiversity Information Facility—is an international network and research infrastructure funded by the world’s governments and aimed at providing anyone, anywhere, open access to data about all types of life on Earth.

One of its missions is to promote a culture in which people recognize the benefits of publishing open-access biodiversity data, both for themselves and for society at large.

By making your data discoverable and accessible through GBIF and similar information infrastructures, you will contribute to global knowledge about biodiversity, and thus to the solutions that will promote its conservation and sustainable use.

Data publishing enables datasets held all over the world to be integrated, revealing new opportunities for collaboration among data owners and researchers.

Publishing data enables individuals and institutions to be properly credited for their work creating and curating biodiversity data, by giving visibility to publishing institutions through good metadata authoring. This recognition can be further developed if you author a peer-reviewed data paper, giving scholarly recognition to the publication of biodiversity datasets.

Collection managers can trace usage and citations of digitized data published from their institutions and accessed through GBIF and similar infrastructures. https://www.gbif.org/publishing-data

Some funding agencies now require researchers receiving public funds to make data freely accessible at the end of a project.

Why at EFSA?

The European Food Safety Authority (EFSA) is responsible for providing the Commission and the public with independent scientific advice on food safety and risks in the food chain.

EFSA will use this information on distribution and abundance of wildlife to improve risk assessments associated with diseases affecting wildlife, livestock and humans.

How to use it?

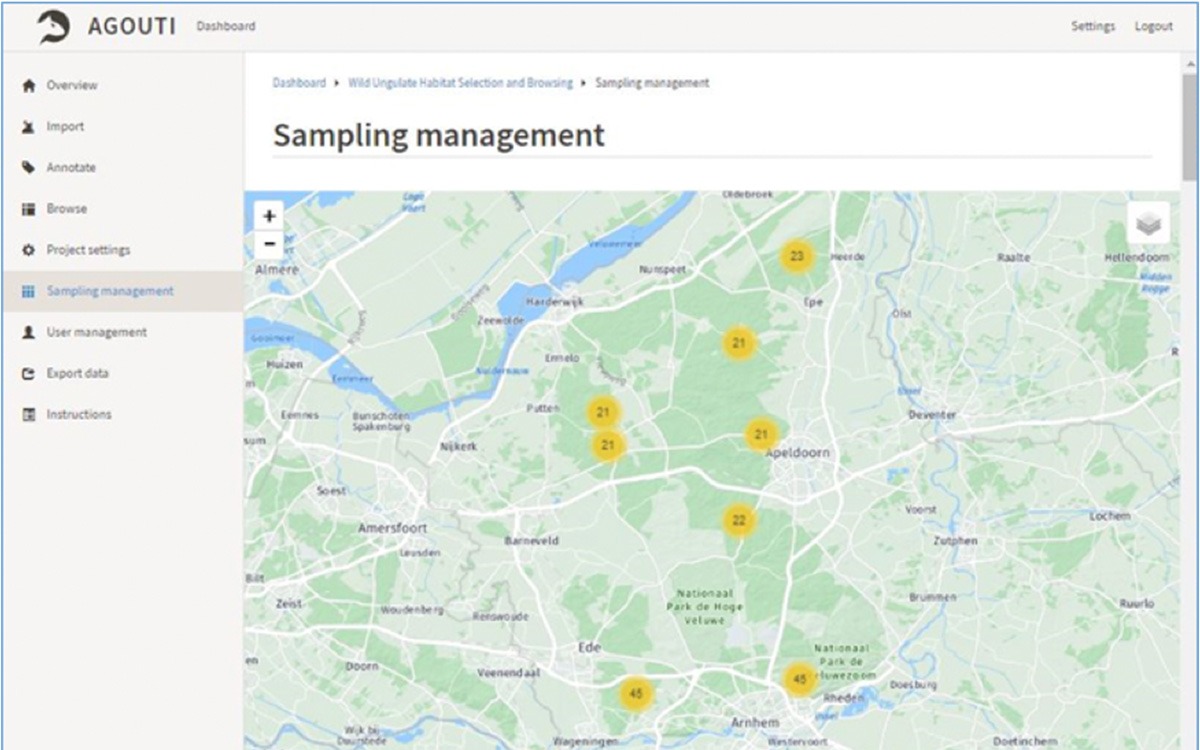

Agouti is designed for easy management and processing of images and data from camera-trap surveys. The ultimate goal is to collect data, standardize annotations, and make the data available to the wider science community. Agouti hosts a collection of projects from organizations and individuals. Projects are distinct collections of deployments – series of photos/videos that come from a single camera trap at a single location – that share a certain survey protocol.

Projects are worked on by participants in six different roles. The highest role level is Principal Investigator, who has full control over the project, and the lowest is Volunteer, who can only annotate photos that have already been uploaded. A user can have different roles in different projects. The contents within the projects are not visible to non-participants. Ownership and sharing decisions are at the project level.

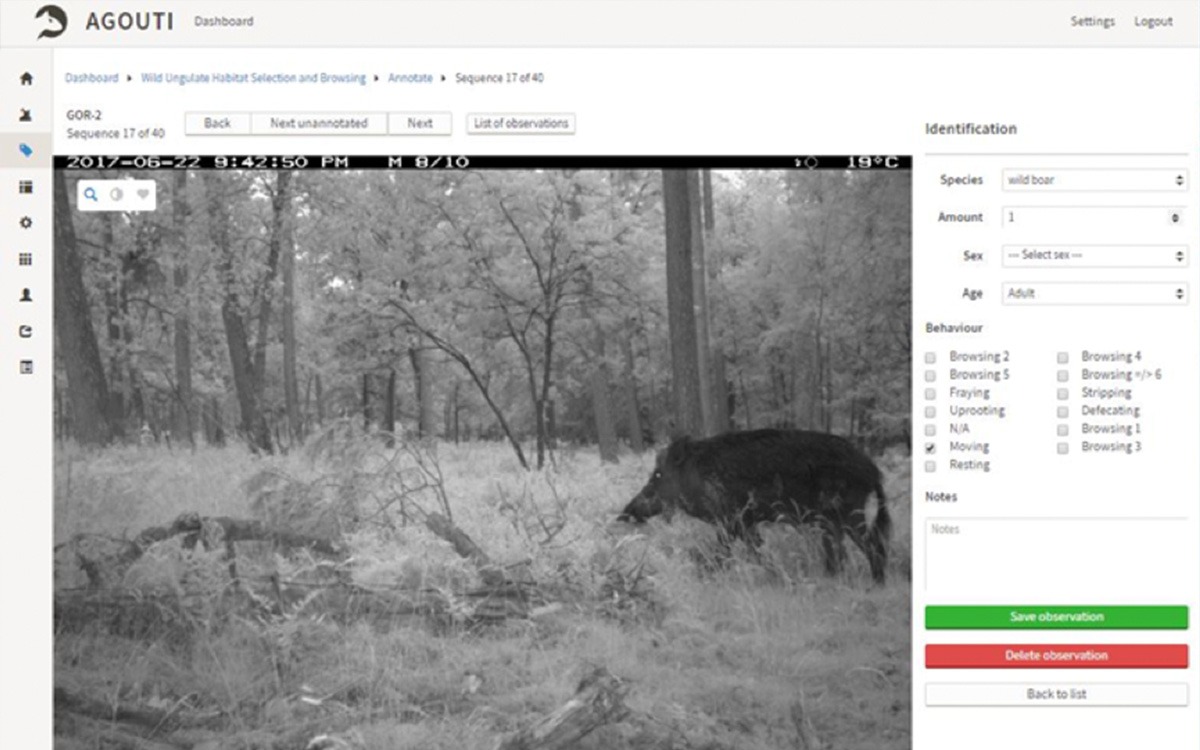

Project participants upload the data from their camera trap memory cards and manually enter the location and other metadata. Agouti then imports the images, pulls dates, times and other data from the image metadata, and uses the time stamps to group images into sequences that represent the same event (e.g. an animal passing). Images are passed through automated image recognition to identify sequences containing people. These sequences are set aside for privacy reasons. The remaining sequences are inspected by analysts who annotate each with one or more observations, using an easy interface. An interface for expert validation is in the works.
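To illustrate the idea of grouping images into sequences by time stamp, here is a minimal Python sketch. It is not Agouti's actual implementation: the function name `group_into_sequences` and the 120-second gap threshold are assumptions chosen for illustration only. The sketch starts a new sequence whenever the gap between two consecutive images exceeds the threshold.

```python
from datetime import datetime, timedelta


def group_into_sequences(timestamps, max_gap=timedelta(seconds=120)):
    """Group image time stamps into sequences (hypothetical helper).

    A new sequence starts whenever the gap to the previous image
    exceeds max_gap. The 120-second default is an assumed value,
    not a documented Agouti setting.
    """
    sequences = []
    for ts in sorted(timestamps):
        if sequences and ts - sequences[-1][-1] <= max_gap:
            # Close enough to the previous image: same event.
            sequences[-1].append(ts)
        else:
            # Gap too large (or first image): start a new sequence.
            sequences.append([ts])
    return sequences


# Example time stamps: three images seconds apart, then one hours later.
images = [
    datetime(2023, 5, 1, 22, 14, 3),
    datetime(2023, 5, 1, 22, 14, 5),
    datetime(2023, 5, 1, 22, 14, 9),
    datetime(2023, 5, 2, 3, 40, 0),
]

sequences = group_into_sequences(images)
print([len(s) for s in sequences])  # [3, 1]
```

In this example the first three images fall within a few seconds of each other and form one sequence, while the image taken hours later becomes a sequence of its own. A shorter or longer threshold would split or merge events differently, so the appropriate value depends on the survey protocol.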

The data can be exported for analysis in various packages. We are introducing tools to summarize project data in graphs and tables. Images and data are safely archived on a university server, mirrored and backed up in two independent and physically separated datacenters. Links for transfer to a permanent archive – Zenodo – and to GBIF will be added. Project owners can choose to make data available to scientists for research.

The project will be set up so that all data can be automatically made available for sharing, and we will promote contributions to GBIF or EFSA, using an explicit statement on the web portal.

The Agouti interface for annotating image sequences. The form on the right side allows the analyst (‘spotter’) to add one or more observations to each image sequence. The slider below the image allows users to scroll through the images or play the entire sequence as a video clip.